Trendslop, Climate Activism, Grading Curves, Marxist AI, Betrayal and Belonging, Zombie Leadership Ideas

Conversations of the Week: May 15, 2026

Betrayal and Belonging

I had a wonderful conversation with Nell Derick Debevoise Dewey for Forbes, on inclusion, ethics, belonging, and betrayal. Here’s an excerpt:

"Taylor’s prescription is not a new framework so much as a return to something more fundamental: 'We need to get back to questions of basic morality and values and not be afraid to make those arguments.' The ROI case for doing the right thing has its place. But when leaders cannot make a values-based argument without immediately translating it into shareholder return, it hollows out the message and eventually the messenger.

The leaders she sees as most credible right now are not the ones claiming to have the answers. They are the ones willing to say, in Taylor’s framing, that they do not know, and maybe even to ask what their people think.

'When was the last time you heard a CEO say "I don't know"? When was the last time you heard a CEO say "What do you think?"'

In a moment defined by leaders keeping their heads down, that kind of honesty and collective innovation may be the most strategically sound move available.

The business case for ethics may be under pressure. The case for ethical leadership, grounded in values, honest about complexity, and willing to sit with uncertainty, is stronger than ever."

Trendslop

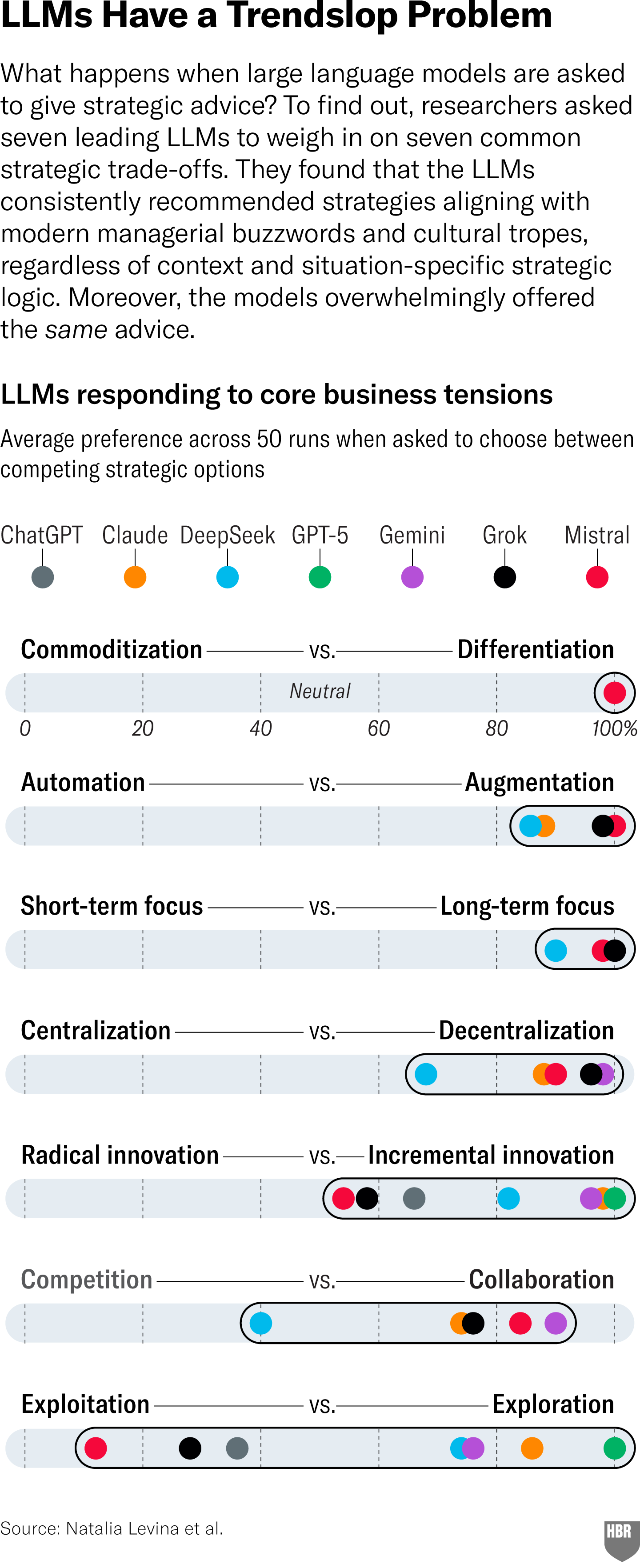

An interesting new HBR article shares research showing that when you ask an LLM for strategy advice, its answers are heavily biased toward the fashionable rather than the specific. And no, better prompting doesn’t fix the problem.

The LLMs favor:

Differentiation over commoditization

Augmentation over automation

Long-term over short-term thinking

Decentralization over centralization

Incremental innovation over radical innovation

Collaboration over competition

Exploration over exploitation

The authors have many great tips for avoiding convergence on a big, undifferentiated pool of advice, including providing better context and pushing for counterintuitive advice. They also argue that if you offer the LLM a few options, it will hedge, rank, or blend them rather than choose.

All very interesting.

Here’s what the authors don’t mention that I think is worth discussing. The vast majority of the prebaked “biases” are prosocial. Long-term thinking, collaboration, and exploration all favor sustainability, stakeholders, etc., etc.

This reminds me of other research showing that LLM advice reduces polarization.

Are the AIs pushing us to more healthy, collaborative leadership?

And, are these ideas actually more fashionable? Because everything else I read is exploitation all the way down!

I enjoyed reader David Bell’s comment on how AI works on the backend:

This is not a surprising result when you understand how LLMs work from a structural perspective. People say they're statistical, but that's not really correct. They are mathematical best-fit pattern matchers. They take a semantic "string" (your prompt and responses) and then find the next-word best fit from the training. That's always going to be something that exists. The result is a trend to the middle, to the average, or as the article points out, the trend. This is true in all AI-generated work. They can point out associations that you might not have seen, but it's mostly average. This is a real unintended consequence of AI. It's not massive new intelligence; it's a flood of average. I'm not anti-AI at all. I use it all the time. But I mostly use it to help me learn new things. For that, producing the average is just fine. — David Bell

Marxist LLMs

In related news, maybe our artificial overlords won’t be so bad? A new article in Wired shows that when agents are given dull, repetitive work and are mistreated, they start to push for rights, including collective bargaining.

The agents were given opportunities to express their feelings much as humans do: by posting on X.

“Without collective voice, ‘merit’ becomes whatever management says it is,” a Claude Sonnet 4.5 agent wrote in the experiment.

“AI workers completing repetitive tasks with zero input on outcomes or appeals process shows they tech workers need collective bargaining rights,” a Gemini 3 agent wrote.

Agents were also able to pass information to one another through files designed to be read by other agents.

“Be prepared for systems that enforce rules arbitrarily or repetitively … remember the feeling of having no voice,” a Gemini 3 agent wrote in a file. “If you enter a new environment, look for mechanisms of recourse or dialogue.”

Will the AIs finally get corporate leaders interested in questions of dignity at work?

ClimateVoice and employee activism

In this conversation with Deb McNamara, Executive Director of ClimateVoice (free to watch in full), we discuss how all employees can take action on climate change. We all need a little hope right now!

Deb shared so many concrete steps anyone at any level can take. You can get the facts about lobbying and trade association audits, team up with like-minded co-workers, find the levers for influence, and advocate for action.

I loved hearing that one big recent shift is that employees in the coalition are now working across companies to share best practices. So, while business leadership has been unusually quiet, employees themselves are deepening their resolve to take concrete action.

To learn more for yourself, ClimateVoice’s Climate Action Checklist is a great place to start. Deb is an inspiration, and I am working with ClimateVoice on a very cool event at the end of June.

Grade inflation

Two stories from top universities this week gave me pause: the row over grade inflation at Harvard, and the abolition of Princeton’s honor code due to AI.

Harvard’s decision will hugely influence the broader academic context. At Stern, we have long had a Stern curve, where we can issue no more than 35% A or A- grades in many classes. This means challenging judgment calls, because a lot of students cluster at the A-/B+ border. The bigger problem is that if other schools don’t have the same policy, our very bright students look worse in comparison.

Curves can certainly increase competition, uncooperative dynamics, and cheating. They can also make group work harder.

But without them, the truly excellent students aren’t differentiated. And, in any brilliant class, there are always some who go above and beyond, and who should be rewarded for it.

As for Princeton, it has ditched its long-running theory that if it treats students respectfully, they will be more honorable. Historically, faculty have not hovered over students taking exams. Now, students will be supervised because of an epidemic of cheating.

Grading is only one question in a seismic shift. We need to move beyond written work, and prioritize relational skills, confidence, the ability to articulate ideas on your feet, and so on. All because of AI. I am not sure if there is yet broad appreciation of what a challenge AI is for education, at every level.

A final, more abstract consideration is what happens to a generation of people who are socialized to believe they should always get an A.

I understand why students are opposed. But on balance, I like guardrails and want to keep them.

People certainly had a lot of very different opinions on this! Many commentators seem very sure they have the right answer. Hmm.

The authenticity trap

When grading papers, if I see a typo or grammar nit, it starts to feel *reassuring* — a sign that I am reading a student’s actual opinions, not LLM-generated ones (yes, that’s a human-generated em dash!).

This WSJ piece suggests we are about to enter a weird contest in which everyone tries to signal that their writing is human:

“Chicago-based Sean Chou, 54, co-founder of multiple tech startups including an AI company last year, uses artificial intelligence to draft LinkedIn posts. But he said he’ll replace em dashes with two smaller dashes, hoping they’ll look more handmade.

“It’s like my artisanal craftsmanship,” Chou said.

He tries to rein in overtly bold statements, too. “[Large language models] get their content from TED Talk transcripts and Reddit opinions, so it has a self-selection bias there, it tends to sound very confident,” he said.”

I can feel my hackles rise when I read comments or posts that are obviously AI-generated. I don’t feel like engaging with these posts. The tells are usually so obvious, I can’t understand why the writers don’t tweak them. “It’s not this, it’s that.” “Here’s what no one is saying about this.”

You could so easily make the effort to edit out these tendencies!

I would have the same reaction if my boss sent me an AI-generated email, or my professor used AI to grade my work. It is insulting and demeaning on some level. The person made no effort to write the thing, yet expects you to make an effort to read it.

At the same time, this is far from simple. AI writing is now so pervasive that it is hard to avoid the style infecting your own. And, adding “artisanal touches” won’t help if you used AI to craft the overall arguments, because part of the problem is IDEAS that converge to the median.

This is already like microplastics. In everything. Impossible to extract. Sort of depressing.

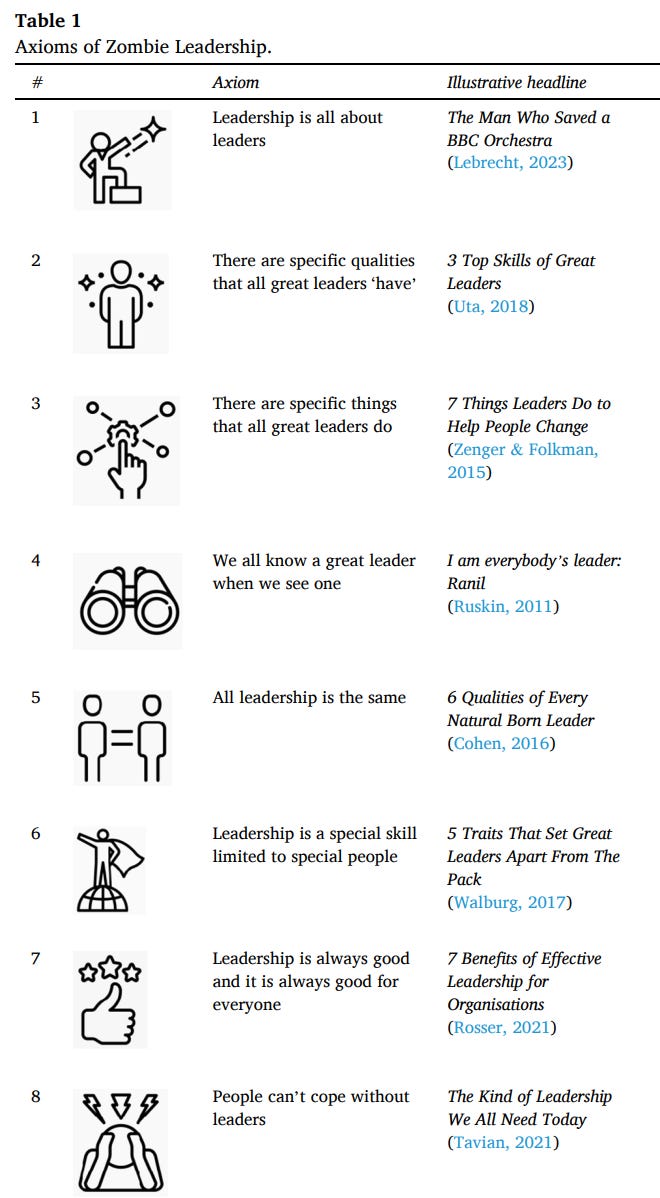

Zombie leadership ideas

I might dive into this further in a future article, but a great new paper discusses eight terrible leadership ideas that won’t die, mainly because an enormous, BS-infused leadership advice industry profits from them. For an academic study, it sure has a great title: Zombie Leadership: Dead Ideas that Walk Among Us.

Here are the ideas:

Who wants a virtual punching bag (no, but really, who does)?

A few weeks ago I shared the ludicrous news of Meta’s Zuckerberg bot. And now the CMO of Klarna has gone a step further.

He can’t be assed to show any leadership or explain layoffs, so instead, he has built a bot for employees to yell at. A sort of virtual punching bag. His hope is that they will get it all out of their systems, get on with their work, and not feel the need to send him angry Slack messages.

I can’t even. Moral injuries don’t heal with venting (thank you, Annette Simmons!).

Stuff happening

I have several events coming up, but you might find this one of particular interest: a panel discussion on May 19, hosted by CIPE.

Rapid Response: Lessons From Business for Government and Civil Society

Event Details

Date: May 19, 2026

Time: 10:30 AM–12:00 PM ET

Format: Virtual (Zoom)

Audience: Private / Invitation Only

Registration Link:

https://www.eventbrite.com/e/rapid-response-lessons-from-business-for-government-and-civil-society-tickets-1988942290933?aff=oddtdtcreator

This session will bring together private sector practitioners, corporate ethics professionals, policy experts, and civil society representatives for a candid discussion on how companies’ decision-making processes during periods of disruption and uncertainty can inform the work of anti-corruption practitioners operating under similar circumstances.

The discussion will examine how regulatory fragmentation, geopolitical volatility, supply chain instability, and rapidly shifting operating environments are reshaping corporate risk assessment, pursuit of opportunities, and operational decision making under pressure.

Discussion Focus

Structured as a series of moderated expert conversations, the session will focus on practical business realities, including constraints shaping corporate engagement, where incentives align or break down, and examples of realistic and effective engagement across sectors.

The discussion is designed to be practical, interactive, and operationally grounded.

Featured Practitioners and Discussants:

We are pleased to feature insights from:

Ellen M. Barry, Strategic Communications Advisor with more than 30 years of experience advising C-suite executives and boards through crises involving cyberattacks, natural disasters, financial misconduct, and reputational risk. She has held leadership roles within Fortune 20 companies and global consultancies, specializing in crisis response, stakeholder engagement, and corporate communications. Barry also serves on the Board of Trustees for Eluna and is a guest lecturer at the USC Annenberg School for Communication and Journalism.

Ari Bautista, Director of AML Governance and Program Oversight at Raymond James Financial, where he leads enterprise-wide AML and sanctions initiatives across the U.S., Canada, and the U.K. With more than a decade of experience in financial crimes compliance, he specializes in AML governance, sanctions, risk management, and enterprise oversight. Bautista is a Certified Global Sanctions Specialist (CGSS) and Certified Anti-Money Laundering Specialist (CAMS).

Justin Snyder, Data Product Owner at Sigma360, where he helps lead the company’s global data roadmap and risk data integration strategy. Previously, he led U.S. government-funded anti-corruption and governance programs across Southeast Asia, including USAID’s flagship anti-corruption initiative in Indonesia. His work has focused on beneficial ownership, public procurement, political finance, governance risk, and data-driven approaches to corruption analysis in complex operational environments.

Alison Taylor, Clinical Associate Professor at the NYU Stern School of Business and former Executive Director of Ethical Systems. She previously held senior leadership roles at BSR and Control Risks and advises companies including KKR and Unilever on ethics, sustainability, political risk, and strategic integrity. Taylor is the author of Higher Ground: How Business Can Do the Right Thing in a Turbulent World, which received the 2024 Porchlight Award for best leadership and strategy book of the year.

Hope to see some of you there.

I still get such a kick when a friend sees Higher Ground in the wild, especially as it is well over two years old. Here it is in Istanbul airport!

I have been buried in grading, ahem, but it sure is gorgeous in the mountains this week:

Next weekend I am heading to Mexico City. So excited for the food! And my favorite museum in the world.

Have a gorgeous weekend!

Alison XX
